Microsoft

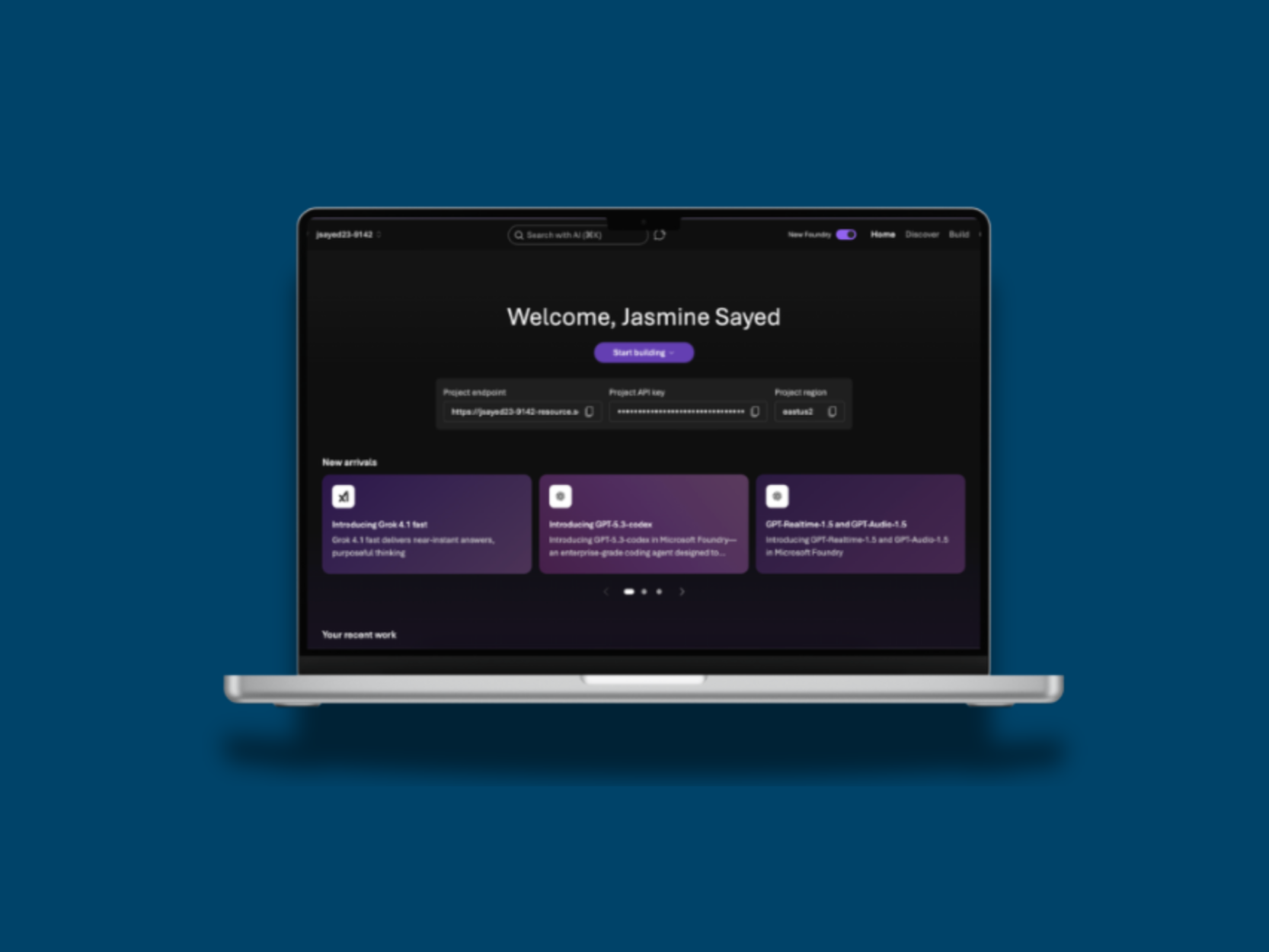

Working with Microsoft’s CoreAI team to improve the experience of Microsoft Foundry, a platform for building and deploying AI agents.

INTRODUCTION

What am I doing on this project?

From January to March 2026, I am working with Microsoft on Microsoft Foundry, collaborating with the CoreAI team to improve the developer experience of Microsoft’s AI platform. Microsoft Foundry enables students, startups, and developers to build and deploy AI agents for their own businesses and projects.

As part of the team, I partner closely with product managers, engineers, and researchers to identify usability gaps and refine workflows across the platform. Through this work, I contribute to making complex AI tooling more accessible, intuitive, and impactful for a diverse developer audience.

PROJECT OVERVIEW

My Role

User Experience Researcher

Team

1 manager, 4 user experience researchers

Timeline

January 2026 - March 2026

Tools

Microsoft Foundry, Figma

How can I improve the Microsoft Foundry agent creation experience for developers?

This work is still in progress: the project concludes at the end of March 2026, and this case study will be updated with specific details upon completion. I currently drive the following research initiatives:

Leading and moderating usability studies to evaluate the agent creation experience

Conducting heuristic evaluations to proactively identify friction points and interaction inefficiencies across complex AI workflows

Designing and facilitating user interviews and task-based testing to uncover high-impact usability gaps and opportunity areas

Translating qualitative findings into prioritized, actionable UX recommendations aligned with product and engineering constraints

Presenting research insights and strategic recommendations to cross-functional stakeholders to influence design and roadmap decisions

At the end of this project, I will present my final conclusions and actionable recommendations to the CoreAI team to inform the next phase of product design and strategy.

TAKEAWAYS

What I’ve learned so far

Moderating and observing usability tests builds empathy for users.

Leading usability tests and taking notes during them lets me see firsthand how users interact with the product and where they encounter pain points. Synthesizing these observations into actionable recommendations reinforces the importance of listening carefully, asking the right questions, and translating insights into design improvements that address real user needs.

Heuristic evaluations help uncover usability gaps efficiently.

By conducting heuristic evaluations, I can quickly identify patterns of usability issues across the product. This process helps me provide structured, evidence-based feedback to designers and engineers, and supports decision-making that improves both functionality and user experience.

Synthesizing qualitative insights strengthens strategic recommendations.

Collecting data from interviews, task-based testing, and observations is only part of the process; translating these findings into clear, actionable UX recommendations ensures that research directly informs design and product strategy. Presenting these insights effectively helps cross-functional teams align on and act on user-centered priorities.